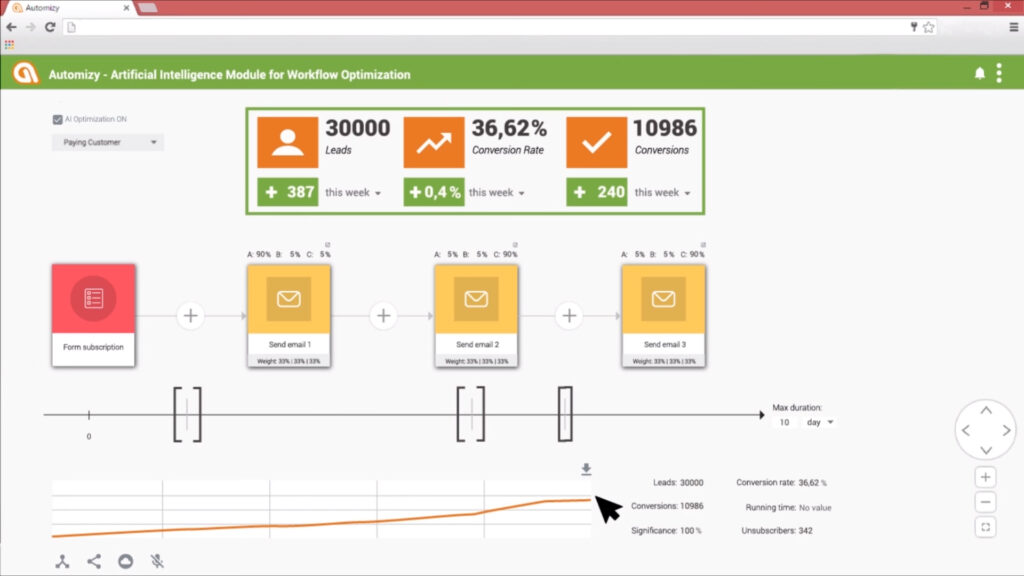

Optimize your email follow-ups automatically: introducing Artificial Intelligence for Marketing Automation

As we discussed before, it is difficult to test how often you should send your follow-up emails. It is also nearly impossible to A/B test your drip campaigns and the emails within them. Now, as promised, we are sharing an easy solution: artificial intelligence for marketing automation, an automatic solution […]